-

The U.S. National AI Safety Board: Regulation Enters the Real World

The United States has formally established a National AI Safety Board (NAISB), an independent body modeled on the National Transportation Safety Board. Announced in early October 2025, the NAISB will investigate significant AI failures, ranging from algorithmic discrimination to catastrophic automation incidents, and publish its findings (White House, 2025). The move signals…

-

Market Disclosure and the Corporate Governance Shift

A quiet revolution is taking place in corporate reporting. In their 2025 third-quarter filings, companies including Microsoft, SAP, and UBS began referencing AI risk governance alongside traditional cybersecurity and ESG disclosures (Bloomberg, 2025). These mentions are brief but significant.

-

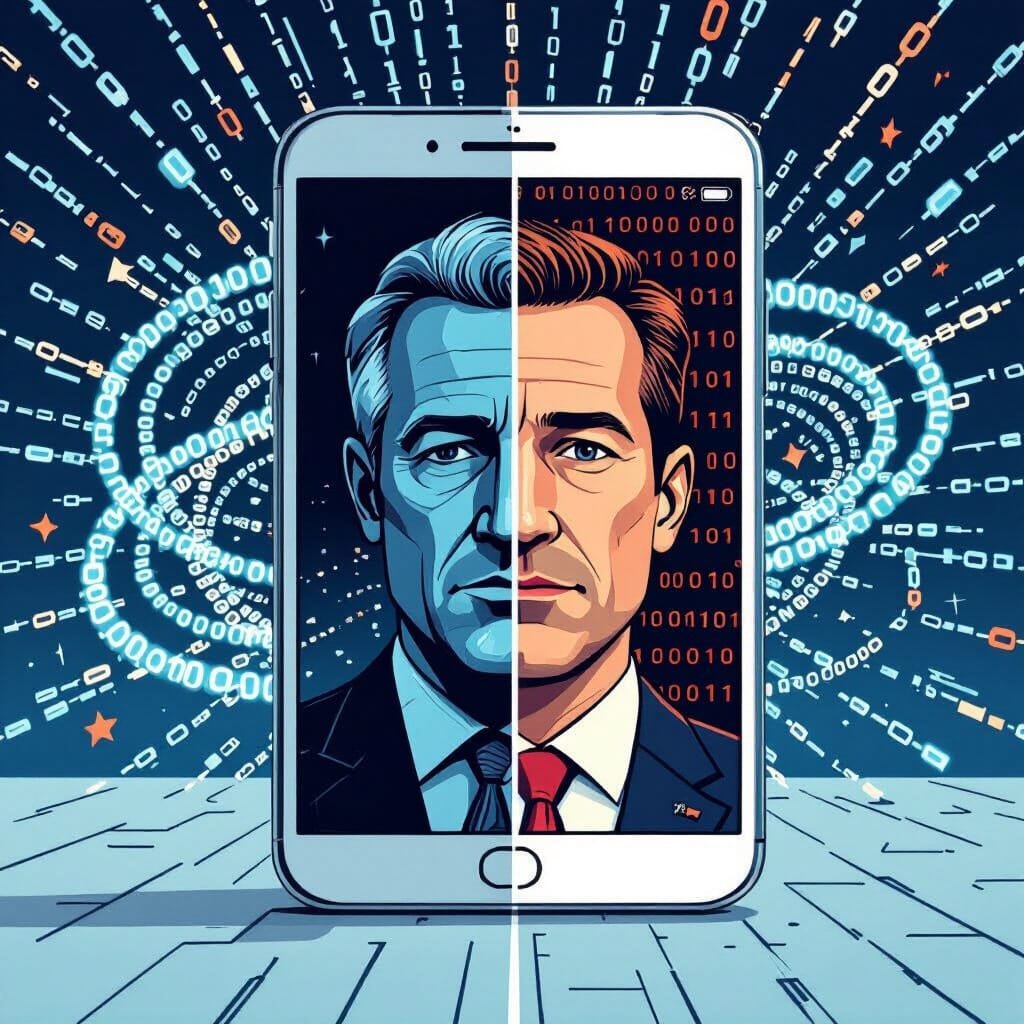

Generative AI in Media: Deepfakes, Elections, and Authenticity Liability

As election seasons unfold across multiple continents, lawmakers and media organizations are racing to counter an explosion of AI-generated misinformation. In September 2025, the European Parliament advanced a bill requiring labeling of synthetic political content, while the U.S. Congress is considering a similar bill, the “AI Transparency in Communications Act.”

-

AI and Defense: NATO, National Security, and the Ethics of Autonomy

NATO’s new Defense Innovation Charter, signed in early October 2025, requires that any AI system deployed for military decision support or targeting be explainable and auditable (NATO, 2025). The alliance’s move reflects growing recognition that the use of AI in defense demands not only effectiveness but also demonstrable ethical restraint.

-

Global Audit Momentum: Regulators Move Toward Cross-Border Oversight

When senior officials from the U.S. Federal Trade Commission and the European Commission met in Brussels this month, they discussed something unprecedented: cross-recognition of AI audits (Reuters, 2025). The idea that audit findings from one jurisdiction could satisfy regulators in another represents the next step in harmonizing global AI oversight.

-

AI Transparency Orders: From California to Global Policy

Governor Gavin Newsom’s recent executive order on AI transparency may reshape global governance faster than many expect. Signed in late September 2025, the order requires state agencies and vendors to disclose when AI systems influence public services and to publish annual transparency reports (California Governor’s Office, 2025).

-

When AI Becomes Both Shield and Target: The New Frontier of Cybersecurity

ENISA’s 2025 threat-landscape update and Microsoft’s mid-year report reveal that AI now serves as both defender and target. Enterprises must harden the models they deploy and treat them as critical infrastructure to sustain trust and resilience.

-

AI-Generated Misinformation and the Challenge for Democratic Resilience

Watchdog reports in 2025 reveal a surge of AI-driven deepfakes and synthetic news targeting elections. Platforms, regulators, and civil society face an urgent race to counter disinformation while protecting free speech.

-

AI in Public Health: Promise, Pressure, and Accountability

New pilots in the UK and an expanded U.S. program show how AI is reshaping public-health response—from flu forecasting to opioid-overdose prevention—while underscoring the need for transparency, validation, and public trust.

-

Mega-Partnerships and the Concentration of AI Power

Nvidia’s planned investment of up to $100 billion in OpenAI, announced September 22, 2025, exemplifies the rapid concentration of AI capabilities among a few dominant players. While such mega-partnerships can accelerate innovation, they also heighten systemic risk and underscore the urgent need for robust governance and global accountability.